Blogs

Learning together: how others can fuel (or hinder) our curiosity

How do the presence, gaze, and activity of others influence our motivation to learn and our curiosity?

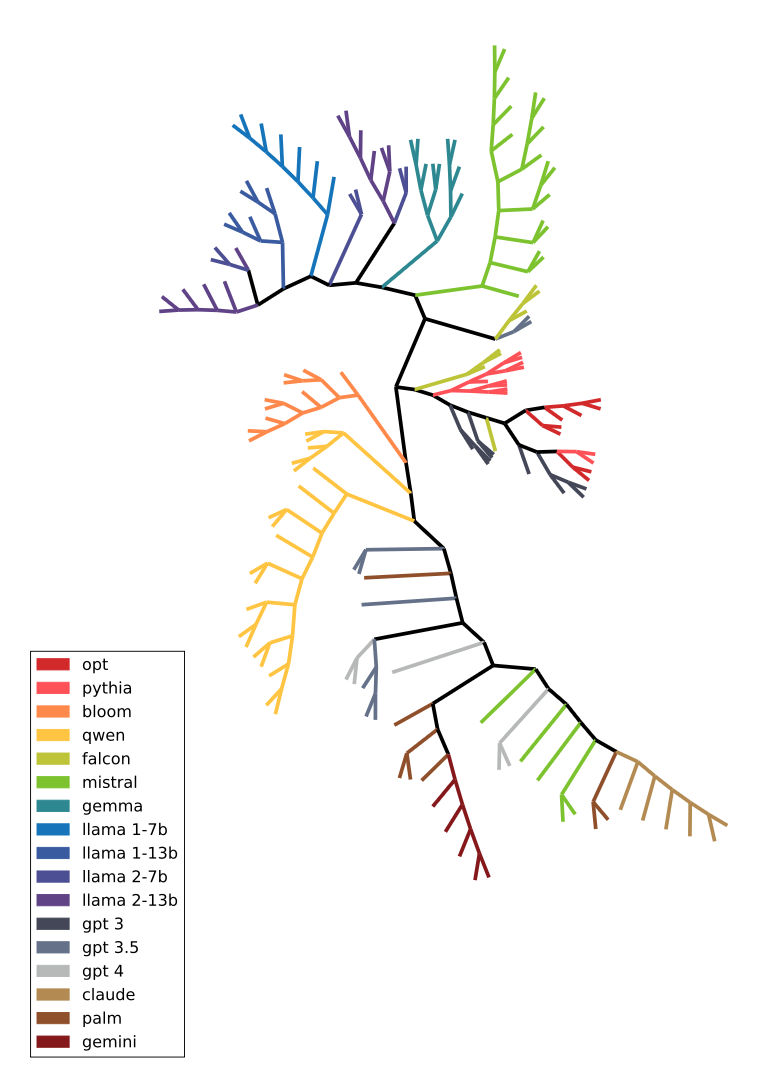

The Phylogenetics of Artifacts - A deep dive into the evolution of cultural objects, artificial life forms and language models

This blog post shows that phylogenetic methods, originally designed to reconstruct evolutionary trees of biological species from DNA, can be extended to any system that replicates and varies. It demonstrates this across three domains: cat breeds as a concrete biological example, myths as a cultural one, and finally language models, where next-token probability distributions serve as artificial DNA to reconstruct the family trees of LLMs in a black-box setting.

Can AIs understand our world? Functionally grounding LLMs in interactive environments.

LLMs struggle to connect words to real-world concepts. We propose functional grounding via reinforcement learning in interactive environments, and demonstrate it with GLAM on BabyAI-Text.

An ecological approach to Artificial Intelligence

We explore how Human Behavioral Ecology can guide the pursuit of human-like AI, proposing a conceptual framework to bridge natural and artificial intelligence research.

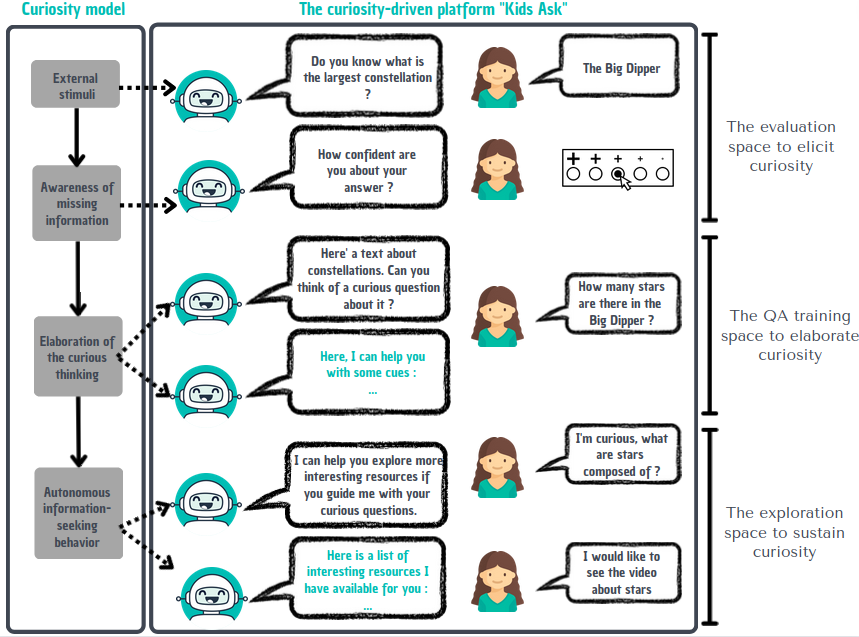

Designing artificial conversational agents to train children's curiosity during learning, a proof of concept through the Kids Ask project

Kids Ask designs educational technologies that stimulate epistemic curiosity in young learners, leveraging artificial conversational agents to support question-asking and self-directed learning.

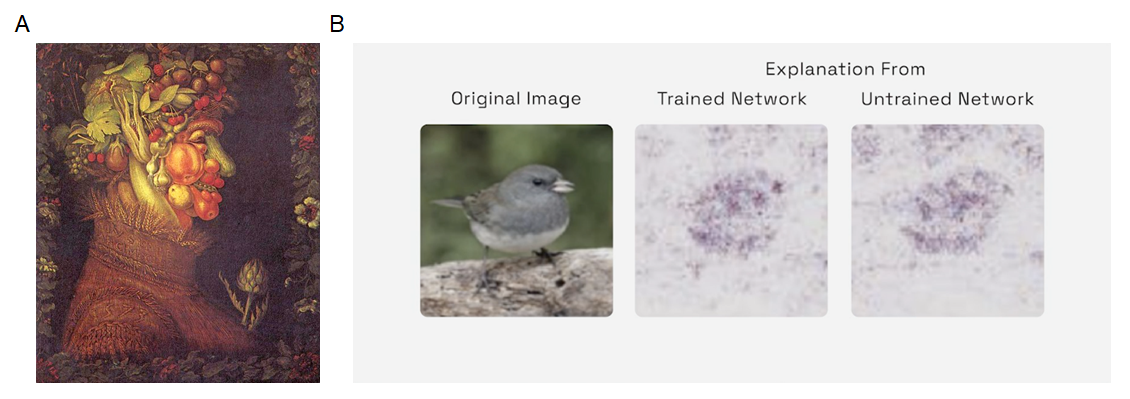

Watching artificial intelligence through the lens of cognitive science methodologies

Large language models are hard to interpret. We propose importing cognitive science methodologies to understand their functional representations, running controlled experiments inspired by cognitive neuroscience.

Guiding and Learning Without Common Ground: a New Interactive Learning Paradigm

Can machines teach and learn from each other without a shared protocol? We introduce the Architect-Builder Problem, a new interactive learning paradigm to investigate this question.

Learning Sensorimotor Agency in Cellular Automata

We learn cellular automaton rules that produce self-organizing agents with embodiment, individuality, and sensorimotor agency, using curriculum learning and diversity search on a differentiable CA.

Intelligent behavior depends on the ecological niche: Scaling up AI to human-like intelligence in socio-cultural environments

Human intelligence was shaped by socio-cultural ecological niches. Developmental AI — modeling infant learning through embodiment and intrinsic motivation — is a promising path toward human-like intelligence.

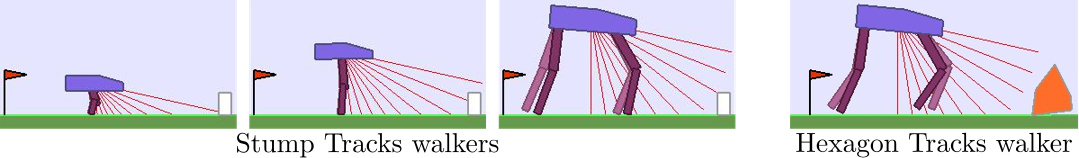

Teacher Algorithms for Deep RL Agents that Generalize in Procedurally Generated Environments

We explore how Automatic Curriculum Learning procedures can scaffold Deep Reinforcement Learning agents within complex, continuous, procedurally generated task spaces.

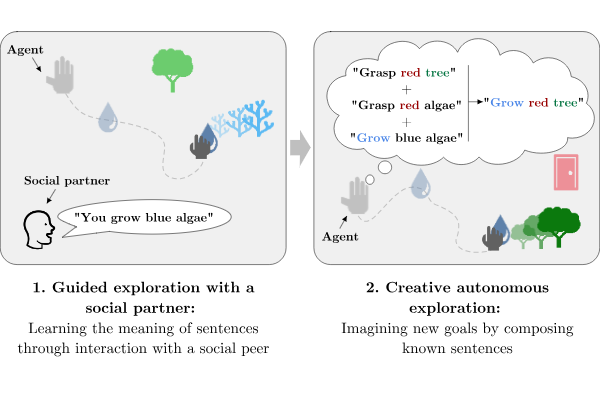

Language as a Cognitive Tool: Dall-E, Humans and Vygotskian RL Agents

Language structures cognition beyond communication. We advocate for Vygotskian embodied agents that use language as a cognitive tool to form abstract representations, reason, imagine goals, and plan.

Intrinsically Motivated Discovery of Diverse Patterns in Self-Organizing Systems

We automate discovery of diverse self-organized patterns in complex systems. Intrinsically-motivated goal exploration on a continuous Game of Life reveals a rich variety of emergent structures.

Intrinsically Motivated Modular Multi-Goal RL

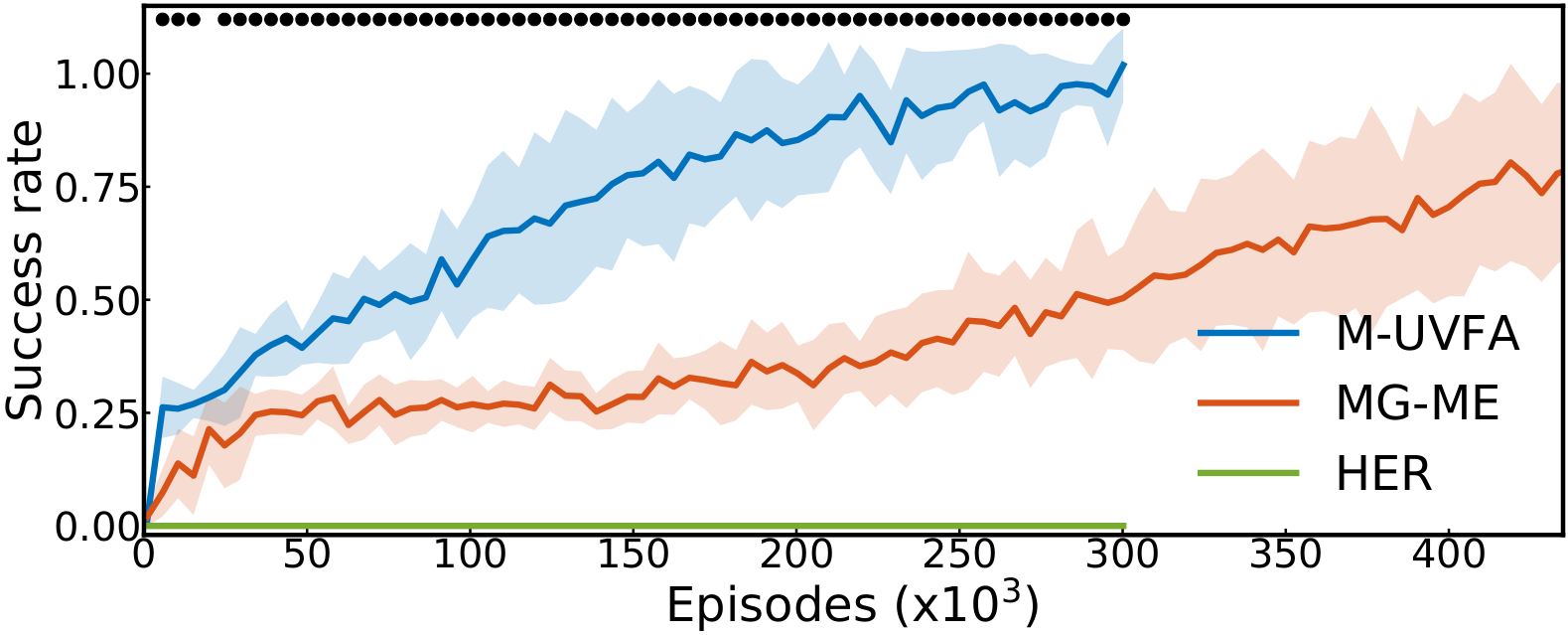

CURIOUS extends multi-goal RL to multiple task types in a single controller. Learning-progress-based intrinsic motivation builds an automatic curriculum, skipping impossible or already-mastered tasks.

Discovery of independently controllable features through autonomous goal setting

How can an agent autonomously discover and learn to represent its own goals? We explore developmental learning for open-ended multi-task acquisition without human-defined objectives.

How Many Random Seeds?

A statistical guide for rigorous comparison of deep RL algorithms, addressing the reproducibility crisis through principled random seed usage and statistical testing.

Bootstrapping Deep RL with Population-Based Diversity Search

Deep RL suffers from inefficient exploration in sparse-reward settings. We decouple exploration and exploitation: diversity search collects varied trajectories, then deep RL bootstraps from them.